There is no doubt that Dynamics 365 Business Central has profoundly changed how partners create and distribute their ERP solutions. Dynamics NAV was a fantastic ERP, with extremely high adoption, global distribution, and a short learning curve for customers and partners.

Dynamics 365 Business Central, although its core is still based on the Dynamics NAV business logic, breaks the old monolith and takes the SaaS road. Yes, on-premises is still alive; I think it will remain the first choice for many customers for years to come, and it will keep driving revenue. But there is no doubt that Microsoft’s plans and strategy have the cloud (and, specifically, the SaaS platform) as their main goal.

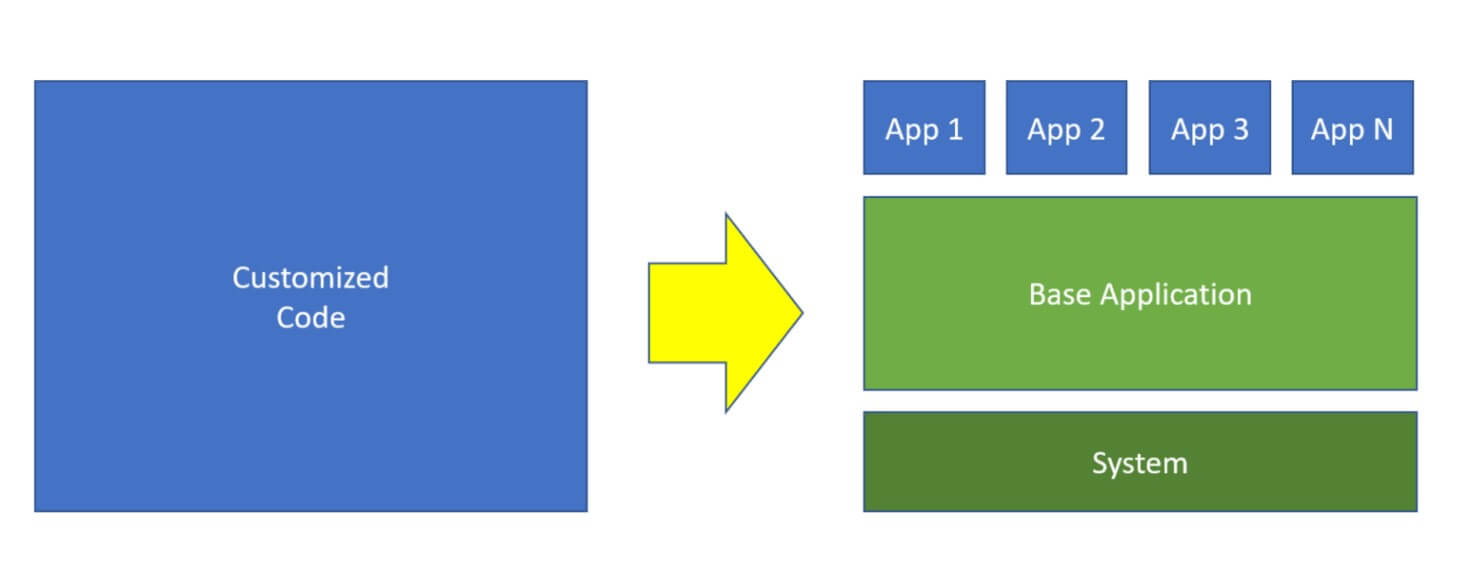

From a developer perspective, Dynamics 365 Business Central is now a ‘layered’ ERP. With Dynamics NAV, you had a set of objects inside your database, and you had the freedom to customize every object as needed. The classic consequence was that if you had N customers, in many cases you had N different ERP solutions. Reusing code was not easy, and migrating a solution from version X to version Y was always time-consuming work. Dynamics 365 Business Central introduces the concept of “extensions” or “apps”. With Dynamics 365 Business Central, you don’t write code directly inside your database. Instead, you use Visual Studio Code and the AL language to create applications and customizations on top of the standard Microsoft base layers. The changes can be summarized as in the following picture:

With Dynamics 365 Business Central you have a System Application and a Base Application. These are the base of Microsoft’s application layer, and you need to write applications and customizations on top of that. That’s a big change!

The second new concept is related to object visibility. With Dynamics NAV and C/AL, the entire set of objects was visible to your customization, because you wrote your code directly in a unified box. Now, things have changed. In the picture above, App 1 is an isolated world. By default, an extension can see only the objects declared in the extensions listed in the dependencies property of its manifest file (app.json). Without such a dependency, you cannot see objects declared in other extensions. That’s another big change!

Dependencies introduce a new aspect that, in my experience, traditional Dynamics NAV developers and partners often forget completely: you need to carefully think through, model, and design your solution architecture before you start coding!

If you’re a partner that worked with NAV in the past, I’m sure that you know that quite often the process to customize the NAV ERP was:

- the customization is assigned to a developer (maybe one who works in the application area involved in the customization and who is free at that moment);

- the developer analyzes the problem and starts modifying NAV objects or creating new objects to satisfy the business request;

- the modified objects (FOBs) are then moved to the customer’s database.

Now, you cannot work in this way anymore. When you need to create new functionality or customize an existing one, you cannot jump straight into coding. You first need to design your customization, which means:

- staying on top of Microsoft’s base layers (no modifications to the base code);

- carefully planning the dependencies that your application needs;

- carefully evaluating whether the functionality you’re creating (or part of it) is needed by, or useful to, other extensions;

- avoiding circular dependencies.

That’s another change! I need to spend a lot of time thinking about my “solution architecture”… what a word!

Circular dependencies are a problem that I see many partners run into quite often. You start by creating extension A; then a new business need arises, and you decide to create extension B, which depends on A, in order to add a new module to your application. But after days of development, you discover that extension A needs to interact with objects declared in B. Since B already depends on A, A cannot depend on B, and so you’re blocked. As you can see, design is extremely important now.
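This kind of problem can be caught early with a simple check on the planned dependency graph. The following is only a minimal sketch in Python, not part of any Microsoft tooling: it assumes that the dependencies of each extension have already been collected (for example, from each app.json) into a plain dictionary, and the names `find_cycle` and `apps` are hypothetical. It walks the graph depth-first and returns the first cycle it finds.

```python
from typing import Dict, List, Optional


def find_cycle(apps: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one dependency cycle as a list of app names, or None.

    `apps` maps an extension name to the names it depends on
    (hypothetical data, e.g. collected from each app.json).
    """
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    state = {name: WHITE for name in apps}
    path: List[str] = []

    def visit(name: str) -> Optional[List[str]]:
        state[name] = GREY
        path.append(name)
        for dep in apps.get(name, []):
            dep_state = state.get(dep, WHITE)
            if dep_state == GREY:
                # dep is already on the current path: we closed a loop
                return path[path.index(dep):] + [dep]
            if dep_state == WHITE:
                found = visit(dep)
                if found:
                    return found
        path.pop()
        state[name] = BLACK
        return None

    for name in apps:
        if state[name] == WHITE:
            found = visit(name)
            if found:
                return found
    return None
```

For the scenario described above, calling `find_cycle({"A": ["B"], "B": ["A"]})` reports the loop between A and B, while a layered design such as `{"A": [], "B": ["A"]}` yields `None`. A common way out is to move the shared objects into a third extension that both A and B depend on.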

I think that solution design is now a key point of change for partners. It requires time and knowledge, and it is mandatory. Partners should start rethinking their product strategy. You cannot have consultants working independently on code for different customers, because this is a sign that you’re not consolidating your codebase. I have never trusted partners that hire developers just for the duration of a project and send them to the customer’s site for months. The new platform is far removed from this way of working, so start thinking about it.

Another new aspect is related to the code itself: do you want your solution to be customizable and extensible, by third-party vendors or by yourself for your different customers? If so, you need to write code with this in mind, so:

- use enums instead of Options (forget Option fields in AL);

- use events in your business logic;

- use patterns that allow not only subscribing to events to execute your custom code, but also disabling the standard logic and enabling your custom logic instead. The Handled pattern, for example, is extremely important for this, because I’m sure that you will have this requirement in the future.
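To make the idea behind the Handled pattern concrete, here is a language-neutral sketch written in Python rather than AL. Everything in it is an assumption made for illustration: the event list `on_before_calc_discount`, the `EventArgs` class (which stands in for AL `var` parameters), and the discount rules themselves. The point is only the control flow: the standard routine raises an event first, and skips its own logic when a subscriber marks the event as handled.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EventArgs:
    """Mutable arguments shared with subscribers (stand-in for AL `var` parameters)."""
    amount: float
    discount: float = 0.0
    handled: bool = False


Subscriber = Callable[[EventArgs], None]

# Hypothetical "OnBefore..." event: a list of subscriber callbacks.
on_before_calc_discount: List[Subscriber] = []


def calc_discount(amount: float) -> float:
    """Standard logic with a hook that lets subscribers replace it."""
    args = EventArgs(amount=amount)
    for subscriber in on_before_calc_discount:
        subscriber(args)
    if args.handled:
        # A subscriber took over: skip the standard calculation.
        return args.discount
    return amount / 10  # standard rule (10%), assumed for illustration


def custom_discount(args: EventArgs) -> None:
    """Customer-specific extension: a flat discount instead of the standard rule."""
    args.discount = 5.0
    args.handled = True
```

With no subscribers, `calc_discount(200.0)` applies the standard rule. After `on_before_calc_discount.append(custom_discount)`, the same call returns the subscriber’s value and the standard rule is never executed. The trade-off is that any subscriber can switch off the standard logic, so the publisher has to decide carefully where to offer this hook.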

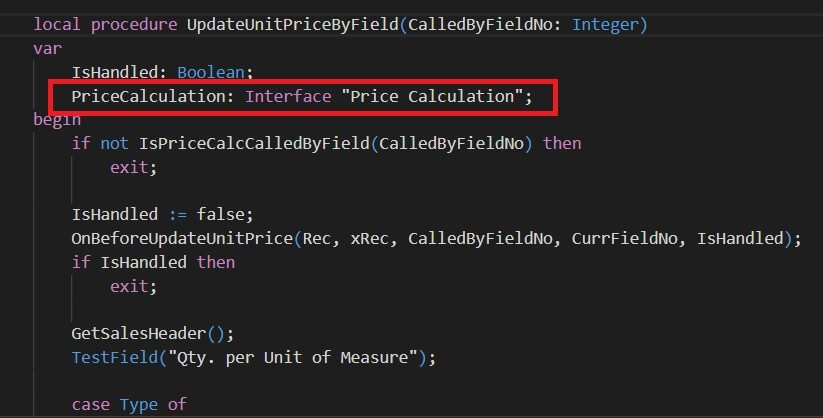

With 2020 Wave 1, start using interfaces to create code that is customizable and reusable. I think an interesting example is the new Price Management feature, which will be introduced in the 2020 Wave 1 preview (more info here).

When using events, remember that in a world where multiple apps can subscribe to the same event, you should architect your code to handle these situations. When several apps subscribe to the same event, AL calls the subscribers in the following order:

- apps with global scope (Microsoft or AppSource apps);

- per-tenant apps.

And within these groups, the order is based on the appId and dependency order.

But there’s more! Have you started thinking about how to manage your code? Source code management (SCM) is a must nowadays, and it’s an aspect that most Microsoft ERP partners often forget. If you want to handle your code reliably, you should now have an SCM policy in place (use branches and Git repos; I suggest Azure DevOps).

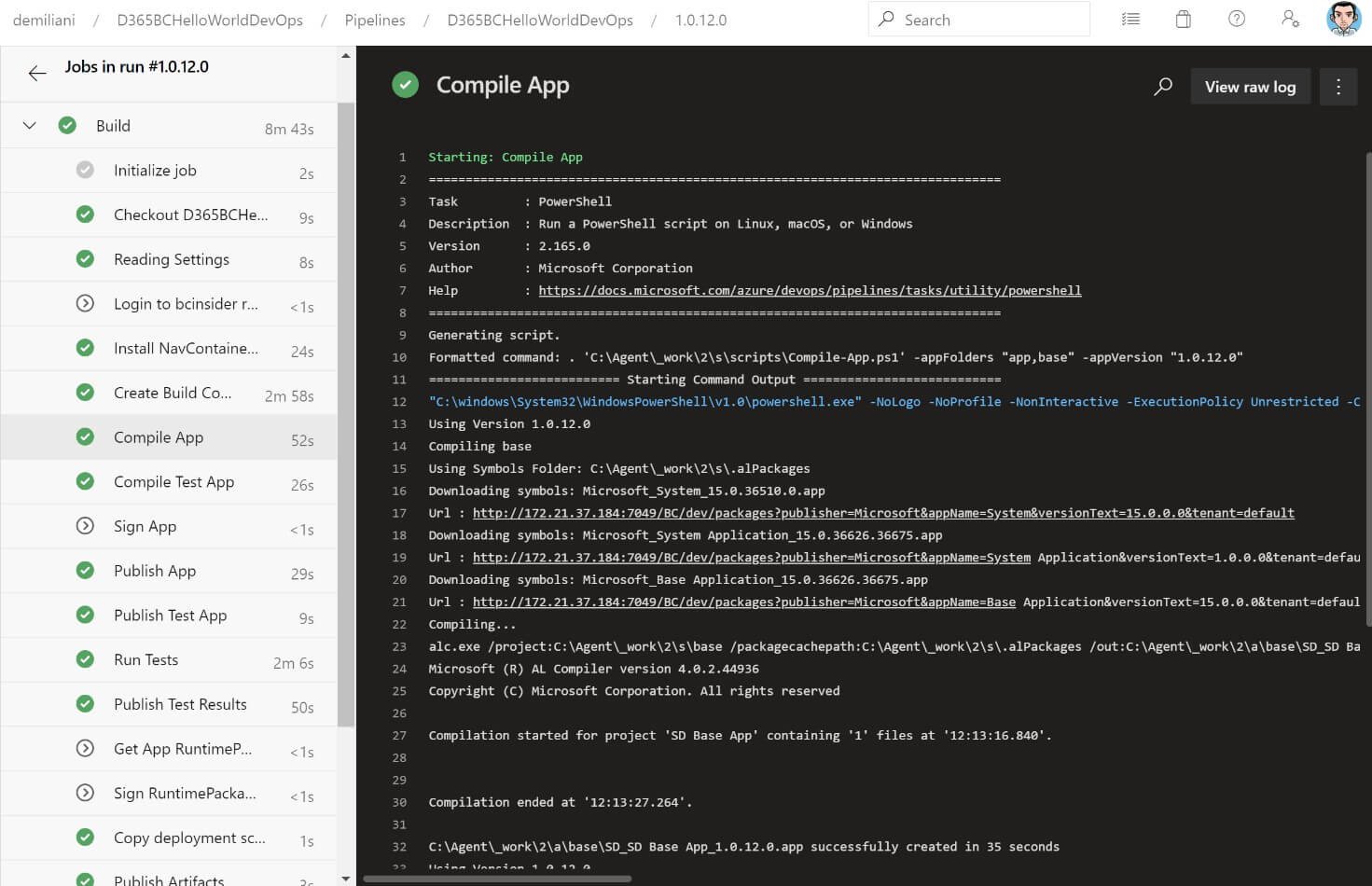

If you work with many different developers who commit code to the master branch of your solution, how can you be sure that every commit is good to go? And what if a commit breaks your master branch? This is something that has to be managed, and the best and most reliable way to do so is to automate it with a build pipeline.

Then, have you started thinking about how to handle the “continuous upgrade” policy that Microsoft has in place for Dynamics 365 Business Central? As I think you already know, Microsoft now releases a cumulative update every month and a major release every 6 months. If you have N different apps, how can you check every month whether your apps are ready for the new release? And how can you test that all your code still works?

Obviously, you can perform a manual process every month that consists of:

- creating a new Dynamics 365 Business Central environment based on the new version;

- publishing your apps;

- testing your apps.

But handling all these steps manually can be very time-consuming and error-prone. The recommended approach is to adopt tools like Azure DevOps and create build pipelines. In a build pipeline, you can define a policy that builds and tests your code on every commit to the master branch:

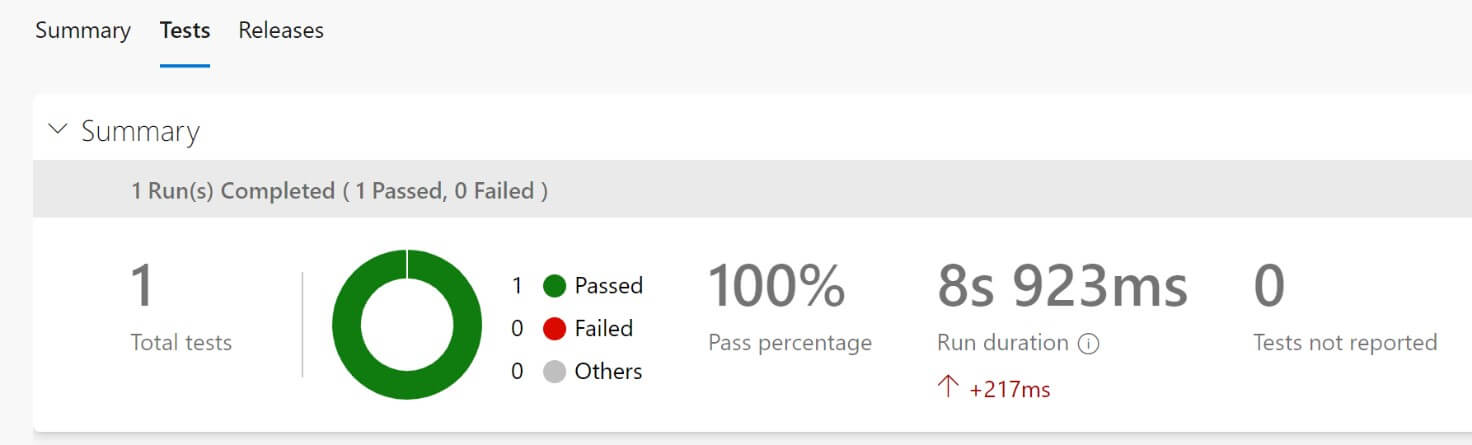

The build pipeline can also execute your tests and then publish the results for you:

In this way, everything is automated, and you don’t have to manually spend time performing these checks. You need to react only if something is broken. That’s a big step forward… You can also automate the releases to your customers by creating a Release pipeline, but that’s another story.

And what about when your apps are published to different customers? How can you check whether the customers’ tenants or your apps perform well? How can you monitor the customers’ tenants? In a cloud world, where you could have different customers on different tenants in different countries, this is a need that will emerge soon. Monitoring all tenants manually may not be enough to provide a great service to your customers.

This is why Microsoft has introduced (and will push a lot in the next release) the integration with Azure Application Insights for monitoring. I’ve talked about this feature here.

Application Insights is a marvelous tool for centralizing your monitoring. You get graphical monitoring of your tenant (dashboards), but also a powerful query engine for analyzing telemetry data (by using Azure Log Analytics). Azure Log Analytics uses the Kusto Query Language (KQL) to query your telemetry data, and the Microsoft D365BC Team is working on releasing a set of pre-defined queries for monitoring.

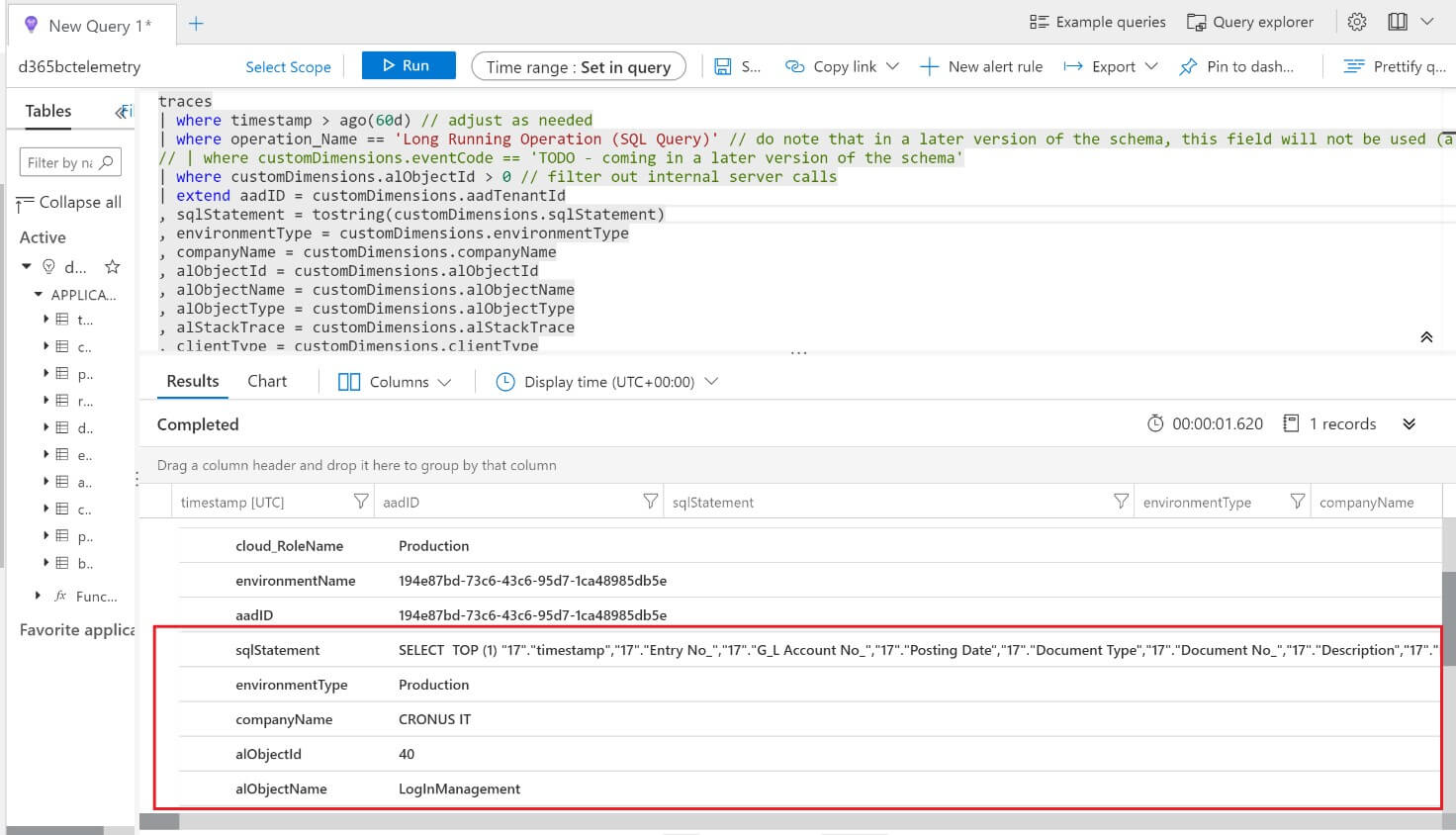

As an example, this is a KQL query for retrieving the long-running queries of a tenant:

[code]

traces
| where timestamp > ago(60d) // adjust as needed
| where operation_Name == 'Long Running Operation (SQL Query)' // do note that in a later version of the schema, this field will not be used (a new field in custom dimensions will be used)
// | where customDimensions.eventCode == 'TODO - coming in a later version of the schema'
| where customDimensions.alObjectId > 0 // filter out internal server calls
| extend aadID = customDimensions.aadTenantId
    , sqlStatement = tostring(customDimensions.sqlStatement)
    , environmentType = customDimensions.environmentType
    , companyName = customDimensions.companyName
    , alObjectId = customDimensions.alObjectId
    , alObjectName = customDimensions.alObjectName
    , alObjectType = customDimensions.alObjectType
    , alStackTrace = customDimensions.alStackTrace
    , clientType = customDimensions.clientType
    , executionTime = customDimensions.executionTime
    , executionTimeInMS = toreal(totimespan(customDimensions.executionTime))/10000 // the datatype for executionTime is timespan
    , extensionId = customDimensions.extensionId
    , extensionName = customDimensions.extensionName
    , extensionVersion = customDimensions.extensionVersion
| extend numberOfJoins = countof(sqlStatement, "JOIN")
    , numberOfOuterApplys = countof(sqlStatement, "OUTER APPLY")
| project-rename environmentName=cloud_RoleInstance
| project-away severityLevel, itemType, customMeasurements, client_Browser, client_City, client_CountryOrRegion, client_IP, client_OS, client_Type, client_StateOrProvince, client_Model, operation_SyntheticSource, operation_ParentId, user_Id, user_AuthenticatedId, user_AccountId, application_Version, sdkVersion, iKey, appId, appName, itemId, itemCount, operation_Name, operation_Id

[/code]
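The division by 10000 in the `executionTimeInMS` line may look arbitrary. It makes sense if, as the query implies, converting a timespan to a number gives a count of 100-nanosecond ticks, so that 10,000 ticks make one millisecond. The following Python sketch only reproduces that arithmetic under this assumption; the function name `timespan_to_ms` is hypothetical.

```python
from datetime import timedelta

TICKS_PER_MICROSECOND = 10      # one tick is assumed to be 100 nanoseconds
TICKS_PER_MILLISECOND = 10_000  # hence the "/10000" in the query


def timespan_to_ms(span: timedelta) -> float:
    """Convert a duration to milliseconds by going through ticks."""
    microseconds = span // timedelta(microseconds=1)  # whole microseconds
    ticks = microseconds * TICKS_PER_MICROSECOND
    return ticks / TICKS_PER_MILLISECOND
```

For example, a query that ran for 1.5 seconds corresponds to 15,000,000 ticks, which the division turns into 1500 milliseconds.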

As a result, this is what you can have with Azure Log Analytics:

If something happens on your tenant, or on one of your customers’ tenants, that you need to act on, it is better for the system to send you an alert than for you to check the telemetry manually. Azure Application Insights makes it easy to define such alerts. Just select Alerts and use a KQL query in the condition definition. You will then receive an email every time the condition is met, and you have a real-time alert policy in place for monitoring your entire customer base.

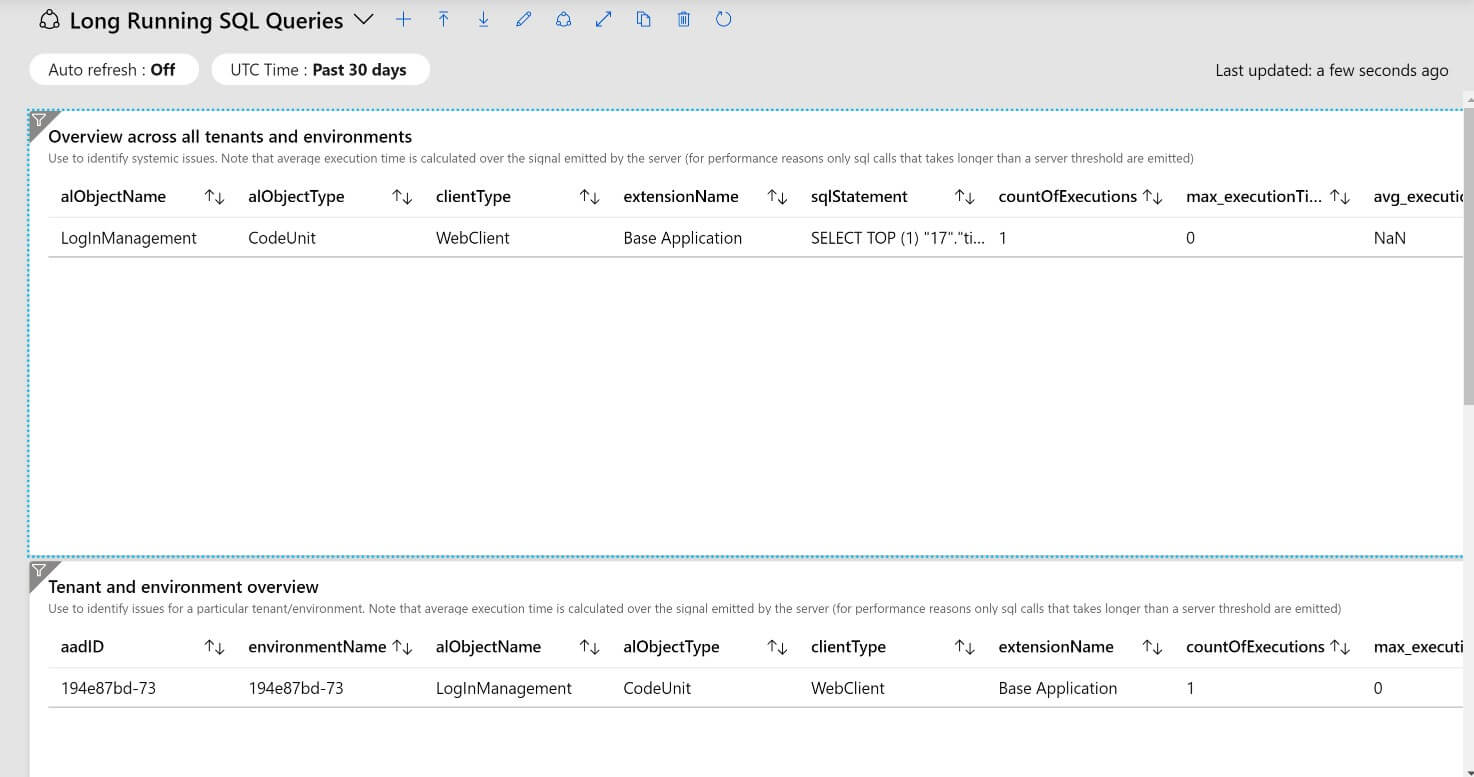

You can also create your custom dashboard (and Microsoft will release pre-defined templates too). As an example, here is my custom dashboard that shows the Long Running SQL Queries of my tenant:

Very powerful, isn’t it?

As you can see, there’s a lot to learn, and a huge set of changes that a partner should start adopting to improve its development lifecycle and its way of supporting customers. Start thinking about this… Forget the “AS400 way of working” (yes, I think that many NAV partners come from the AS400 world, and they still handle projects today as they did years and years ago on the great AS400 platform), improve and reorganize your resources, and start thinking about a new development process. The world where you hire resources only to send them to a customer’s site for a project has ended. Now, if you want to be a winner, you need to manage your ERP process exactly as in the real (and professional) software world.